Self-induced biases in working memory

How is information biased in an intelligent system? Inductive biases, along with the biased statistics of training data, might constitute obvious sources of biased representations. However, there is another plausible source: interference from one's own cognitive operations.

Our team was inspired by experimental and theoretical studies of the interaction between discrimination and feature estimation [1-3]. Specifically, we were interested in how discriminatory decisions could induce biased feature representations. We hypothesized that the repulsive drive engendered by the discrimination task would propagate to the memory representation and bias it in a decision-consistent manner.

To test the hypothesis, we built modular recurrent networks defined as follows.

$$ \begin{aligned} \tau_1 \frac{d\mathbf{r}_1}{dt} &= -\mathbf{r}_1 + f\left( \mathbf{W}_{11} \mathbf{r}_1 + \mathbf{W}_{12} \mathbf{r}_2 + \mathbf{W}^\mathrm{in}_1 \mathbf{u}_1 + \sqrt{2\tau_1} \xi \right) \\ \tau_2 \frac{d\mathbf{r}_2}{dt} &= -\mathbf{r}_2 + f\left( \mathbf{W}_{21} \mathbf{r}_1 + \mathbf{W}_{22} \mathbf{r}_2 + \mathbf{W}^\mathrm{in}_2 \mathbf{u}_2 + \sqrt{2\tau_2} \xi' \right) \\[8pt] \mathbf{z}^\mathrm{DM} &= \mathrm{softmax} \left( \mathbf{W}^\mathrm{DM} \mathbf{r}_1 \right), \ \ \mathbf{z}^\mathrm{EM} = \mathrm{softmax} \left( \mathbf{W}^\mathrm{EM} \mathbf{r}_2 \right) \end{aligned} $$

Here \( \tau_\ast \) are time constants, \( \mathbf{r}_\ast \) recurrent unit activities, \( f \) the activation function, \( \mathbf{W}_\ast \) synaptic weights, and \( \xi, \xi' \) independent Gaussian noise terms. The inputs \( \mathbf{u}_1 \) and \( \mathbf{u}_2 \) carry the discrimination boundary and the stimulus, respectively, and \( \mathbf{z}^\mathrm{DM}, \mathbf{z}^\mathrm{EM} \) are the discriminatory decision-making (DM) and stimulus estimation (EM) outputs.
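The dynamics above can be sketched as a simple forward-Euler simulation. This is only an illustration under stated assumptions, not the code from the repository: the ReLU activation, the step size `dt`, the noise scale `noise_std`, the discretized noise factor `sqrt(2 * tau / dt)`, and the helper names `init_params` and `simulate` are all hypothetical choices made here.

```python
import numpy as np

def softmax(x):
    # Numerically stable softmax over the last axis.
    x = x - np.max(x, axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def init_params(n1, n2, n_in1, n_in2, n_dm, n_em, rng, scale=0.1):
    # Hypothetical random initialization; shapes follow the equations above.
    shapes = {
        "W11": (n1, n1), "W12": (n1, n2), "W21": (n2, n1), "W22": (n2, n2),
        "Win1": (n1, n_in1), "Win2": (n2, n_in2),
        "Wdm": (n_dm, n1), "Wem": (n_em, n2),
    }
    return {k: scale * rng.standard_normal(s) for k, s in shapes.items()}

def simulate(params, u1, u2, dt=0.02, tau1=0.1, tau2=0.1, noise_std=0.1, rng=None):
    """Forward-Euler simulation of the two-module network.

    u1: (T, n_in1) discrimination-boundary input for module 1 (DM).
    u2: (T, n_in2) stimulus input for module 2 (EM).
    Returns z_dm (T, n_dm) and z_em (T, n_em).
    """
    rng = np.random.default_rng() if rng is None else rng
    f = lambda x: np.maximum(x, 0.0)  # assumed activation (ReLU)
    p = params
    r1 = np.zeros(p["W11"].shape[0])
    r2 = np.zeros(p["W22"].shape[0])
    a1, a2 = dt / tau1, dt / tau2
    z_dm, z_em = [], []
    for t in range(u1.shape[0]):
        # Assumed discretization of the white-noise terms inside f.
        xi1 = noise_std * np.sqrt(2.0 / a1) * rng.standard_normal(r1.shape)
        xi2 = noise_std * np.sqrt(2.0 / a2) * rng.standard_normal(r2.shape)
        # Both drives use the activities from the previous step.
        x1 = p["W11"] @ r1 + p["W12"] @ r2 + p["Win1"] @ u1[t] + xi1
        x2 = p["W21"] @ r1 + p["W22"] @ r2 + p["Win2"] @ u2[t] + xi2
        r1 = (1.0 - a1) * r1 + a1 * f(x1)
        r2 = (1.0 - a2) * r2 + a2 * f(x2)
        z_dm.append(softmax(p["Wdm"] @ r1))
        z_em.append(softmax(p["Wem"] @ r2))
    return np.array(z_dm), np.array(z_em)
```

In this sketch, `W21` is the only path by which activity in the DM module reaches the EM module, so setting `params["W21"]` to zero would decouple the estimate from the decision circuit.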

We trained the networks by backpropagation on the combined loss function \( \mathcal{L} = \mathcal{L}_\mathrm{DM} + \mathcal{L}_\mathrm{EM} \), where the decision-making error (\( \mathcal{L}_\mathrm{DM} \)) and the estimation error (\( \mathcal{L}_\mathrm{EM} \)) are each defined as a time-averaged cross entropy.

Here \( p^\mathrm{DM}, p^\mathrm{EM} \) are the ground-truth outputs and \( \Theta \) is the discretized feature space.
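As a rough illustration of the combined objective, the sketch below computes a cross entropy at every time step and averages it over time, then sums the DM and EM terms. The function names, the `eps` stabilizer, and the choice to average over all time steps of a trial are assumptions made here; the actual averaging window used in training is not specified in this post.

```python
import numpy as np

def time_averaged_cross_entropy(p, z, eps=1e-12):
    """Cross entropy between targets p and outputs z, averaged over time.

    p: (T, K) ground-truth distributions at each time step.
    z: (T, K) softmax outputs of the network at each time step.
    """
    ce_per_step = -(p * np.log(z + eps)).sum(axis=-1)  # shape (T,)
    return ce_per_step.mean()

def combined_loss(p_dm, z_dm, p_em, z_em):
    # L = L_DM + L_EM, with equal weighting as in the text.
    return (time_averaged_cross_entropy(p_dm, z_dm)
            + time_averaged_cross_entropy(p_em, z_em))
```

For the EM term, the second axis of `p_em` and `z_em` would index the bins of the discretized feature space \( \Theta \).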

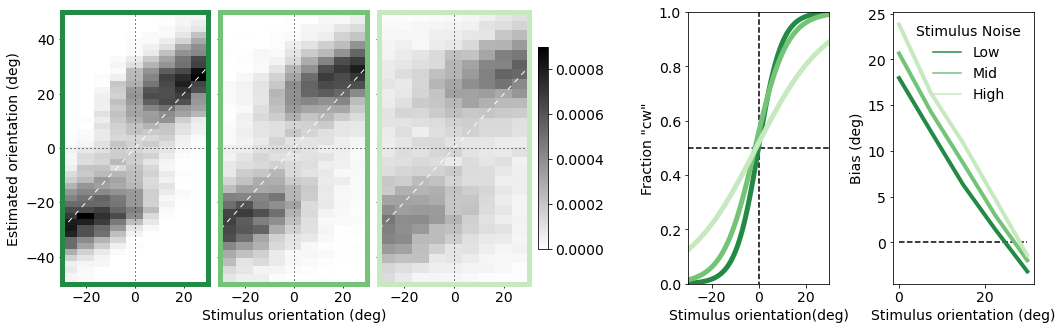

(Left) Distributions of feature estimates produced by the trained networks at three stimulus noise levels, as a function of stimulus orientation relative to the discrimination boundary. (Right) Psychometric functions and estimation biases (correct trials).

We were excited to find that the DM-to-EM feedback connectivity \( \mathbf{W}_{21} \) modulates the biases in the estimates made by recurrent networks trained under the demand of joint accuracy. These results echo previous findings, but extend our understanding by explicitly defining the objective function in relation to recurrent circuits.

Moreover, we found intriguing aspects of the training dynamics and their relationship to the shape of the feedback connectivity. We are currently working on relating the network predictions to the neural representations we observed in human fMRI data. Stay tuned!

Code for training the recurrent networks and for the analysis can be found in the repository.

References

[1] M. Jazayeri and J. A. Movshon, “A new perceptual illusion reveals mechanisms of sensory decoding,” Nature, vol. 446, no. 7138, pp. 912–915, 2007, doi: 10.1038/nature05739.

[2] A. A. Stocker and E. P. Simoncelli, “A Bayesian model of conditioned perception,” Adv. Neural Inf. Process. Syst. 20 - Proc. 2007 Conf., 2009.

[3] L. Luu and A. A. Stocker, “Post-decision biases reveal a self-consistency principle in perceptual inference,” eLife, vol. 7, no. 2010, pp. 1–24, 2018, doi: 10.7554/eLife.33334.